#Day_01

- Set realistic goals

- Know what exactly we need to learn

- Join a course

- Join a forum/community to discuss

- Have a mentor

- Make use of social media by following relevant people and content

- Start a pet project

#Day_02

How does a business analyst look at data?

- Consumer data, Analytics data, Product data, Marketing data, etc.

How does a software engineer look at data?

- As different data types like int, float, string, char, boolean, etc.

How does a data engineer look at data?

- As structured, semi-structured, and unstructured data.

How does a machine learning engineer look at data?

- As numerical, categorical, time series, and text data.

How does a tester look at data?

- Needs to have an understanding of all of the above :-)

#Day_03

- Note down the assumptions before starting.

- Understand the scope well: what to test and what not to test.

- Do not look for a template to start test planning or writing test cases. Create your own based on your understanding of the product, and take feedback from the team.

- Start learning about the application. For a completely new application, explore the requirements and build your understanding. You can also compare it with similar applications already available. If you are testing an existing application, start exploring it directly.

- Understand how an end user is going to use the application and make notes.

- Testing is not one person's responsibility. Start thinking about building a quality mindset in the team: who owns quality, how to test, and what the quality goal is. Thinking about these questions and initiating discussions around them can add real value.

- Start identifying test ideas and help the team incorporate them in the right layer (testing pyramid). Automating the right tests at the right place can save a lot of time and help prevent defects.

- Look at the overall quality umbrella, not just the functional aspects. It is important to identify what to test before deciding how to test.

- Seek help and guidance from the team whenever needed.

#Day_04

- Valid

- Invalid

- Requirement

- Design/Logic

- Code

- Data

- Environment

- Configuration

- 3rd party

- Security and permission

- Functional Business Logic

- UI/UX

- Performance

- Security

- Configuration

- Localisation

- Accessibility

- Usability

- Working as designed

- Duplicate

- Not reproducible

- Out of scope

- Enhancement

- BDD is a process that encourages collaboration across roles to build a shared understanding of a problem statement based on behaviour. Cucumber, on the other hand, is an independent framework that supports BDD.

- We can use a discovery workshop to understand the desired behaviour of a feature to be implemented. There are a few models that can be used in a discovery workshop, such as Feature Mapping, Example Mapping, and OOPSI mapping.

- The BDD process helps us come up with an executable specification that demonstrates, in an automated way, that the requirement we are building is met. Cucumber can be used to automate the executable specification, but it is not mandatory: we can automate it using any framework, not just Cucumber.

- If we are just automating existing test cases using the Cucumber framework, it's not BDD. Instead of helping, this can become extra overhead.

- Converting already automated test cases into the Cucumber framework is something that can never add any value. Think before taking that step.

- If multiple people/teams are working on an automation project, maintaining feature files written by different people in different formats can become a nightmare. We can use templates to generate them so that we don't end up with duplicate statements that have the same meaning.

- We do not need to add Cucumber on top of frameworks that are already written using a DSL. For example, using Cucumber with REST Assured is just an extra layer on the framework, which may add overhead rather than value.
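To show that an executable specification does not need Cucumber, here is a minimal sketch in plain Python of rules and examples that might come out of an example-mapping session. The `shipping_fee` rule, its threshold, and the example rows are hypothetical, invented only for illustration.

```python
# Hypothetical business rule agreed in a discovery workshop (illustrative only):
# orders of 50.00 or more ship free; otherwise a flat fee of 4.99 applies.
FREE_SHIPPING_THRESHOLD = 50.00
FLAT_FEE = 4.99


def shipping_fee(order_total: float) -> float:
    """Implementation of the hypothetical rule being specified."""
    return 0.0 if order_total >= FREE_SHIPPING_THRESHOLD else FLAT_FEE


# Examples captured during example mapping, kept as plain data.
# Each row: (description, order_total, expected_fee)
EXAMPLES = [
    ("order just below the threshold pays the flat fee", 49.99, 4.99),
    ("order exactly at the threshold ships free", 50.00, 0.0),
    ("large order ships free", 120.00, 0.0),
]


def verify_specification() -> list[str]:
    """Run every example and return descriptions of the ones that fail."""
    failures = []
    for description, total, expected in EXAMPLES:
        actual = shipping_fee(total)
        if actual != expected:
            failures.append(f"{description}: expected {expected}, got {actual}")
    return failures


if __name__ == "__main__":
    problems = verify_specification()
    print("specification satisfied" if not problems else "\n".join(problems))
```

The same example table could be plugged into any test runner later; the point is that the agreed examples themselves are executable, with no feature-file layer in between.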

#Day_06

#Day_07

- Completely independent tests, where each test is responsible for creating the state it needs before it starts and for restoring that state once it is done. This strategy ensures that each test is atomic and does not depend on the outcome of another test. We can create the data dependencies with a DB seeding script or through API calls. We can also follow a test-specific naming convention for the generated data so that it is easy to clean up once execution completes. This is a good approach that makes tests more flexible and allows parallel execution. But it has limitations too: since each test performs its own setup and teardown, it adds extra time to the total execution time.

- Each test can decide on and adapt to a particular state based on the environment in which we are running the tests. In this approach, we couple the test data to a specific environment and load the test data based on configuration. This is more applicable when we need to run the same set of tests on multiple environments and the data needed differs by environment. This approach adds the overhead of maintaining multiple sets of data at the same time.

- Tests are tightly coupled to a known sequence of execution. This is not a recommended practice and is useful only in certain scenarios. Test data is created based on a sequence of user actions, and it is also possible to test multiple features together that belong to the same sequence. This minimises the setup and teardown needed at the individual test level but adds a dependency on the test sequence. We may not be able to validate those tests independently, and one test failure may result in failure of the entire sequence.
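A minimal sketch of the first (completely independent) strategy, using Python's `unittest`. It assumes an in-memory `FakeDb` as a stand-in for the real database or API; `FakeDb`, its methods, and the `autotest_<test-name>_` prefix are hypothetical names chosen for illustration.

```python
import unittest
import uuid


class FakeDb:
    """Hypothetical in-memory stand-in for a real database or API."""

    def __init__(self):
        self.users = {}

    def create_user(self, name: str) -> str:
        user_id = str(uuid.uuid4())
        self.users[user_id] = {"name": name, "active": True}
        return user_id

    def delete_where_name_starts_with(self, prefix: str) -> int:
        doomed = [uid for uid, u in self.users.items() if u["name"].startswith(prefix)]
        for uid in doomed:
            del self.users[uid]
        return len(doomed)


DB = FakeDb()


class DeactivateUserTest(unittest.TestCase):
    def setUp(self):
        # Test-specific naming convention: every record this test creates
        # carries a prefix derived from the test name, so cleanup is trivial.
        self.prefix = f"autotest_{self.id().split('.')[-1]}_"
        self.user_id = DB.create_user(self.prefix + "alice")

    def tearDown(self):
        # Bring the system back to the state it was in before the test.
        DB.delete_where_name_starts_with(self.prefix)

    def test_user_can_be_deactivated(self):
        DB.users[self.user_id]["active"] = False
        self.assertFalse(DB.users[self.user_id]["active"])
```

Because each test creates and removes only its own prefixed records, tests like this can run in any order or in parallel; the cost is the extra setup/teardown time mentioned above. Run it with `python -m unittest`.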

#Day_08

- Is the scenario feasible for replicating in an automated manner?

- Is the application we are going to test stable enough for starting with automation?

- Does the scenario to be automated help in smoke/regression testing?

- Does the scenario take more time when we perform the check without a tool?

- Does the scenario need to be verified with multiple sets of data across different environments/platforms/browsers?

I used to focus on automating test cases one by one in a black-box way (UI/API, end-to-end layer). With time and experience, I realised that I was missing the main purpose of automation. I was able to achieve short-term goals, but the approach was not scalable from a long-term perspective. Despite using tools to generate automated tests and test data, the automation backlog never ended.

Today I would like to share a revised checklist, based on my experience so far, that can be used before starting automation in a project:

- Focus should be more on certifying the requirements in an automated way not just the test cases.

- Have a strategy ready to support automation activities in scenarios where the application is not stable, the backend is unavailable or still under development, or a 3rd party is not available.

- The testing pyramid helps to get a balanced outcome from automation by carefully selecting the tests to be automated across different layers like unit, integration, and end to end.

- Quality models can help identify good automation candidates from different types of testing, such as performance, security, and accessibility, not just functional checks.

- Selection of tools/frameworks should align with the product's tech stack. This helps developers and testers collaborate effectively. We are in the era of microservices and micro frontends, so selecting a single tool or framework for the entire project may not scale in most cases.

- Running all the automated tests in CI should be the goal from day one.

- What to be automated and in which layer should be part of definition of done of each user story.

- We need good collaboration between business and entire agile team to achieve automation goals.

- Get buy-in from business stakeholders and measure the progress periodically.
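One way to keep automation moving when the backend or a 3rd party is not available is to code the application against a contract and swap in a stub. A minimal sketch, assuming a payment dependency; `PaymentGateway`, `StubPaymentGateway`, `checkout`, and the canned responses are hypothetical and not a real service contract.

```python
from typing import Protocol


class PaymentGateway(Protocol):
    """Contract the application expects from the (possibly unavailable) backend."""

    def charge(self, order_id: str, amount_cents: int) -> dict: ...


class StubPaymentGateway:
    """Canned responses used while the real backend is down or still being built."""

    def __init__(self, declined_orders=frozenset()):
        self.declined_orders = set(declined_orders)
        self.calls = []  # record calls so tests can assert on interactions

    def charge(self, order_id: str, amount_cents: int) -> dict:
        self.calls.append((order_id, amount_cents))
        if order_id in self.declined_orders:
            return {"status": "declined", "order_id": order_id}
        return {"status": "approved", "order_id": order_id}


def checkout(gateway: PaymentGateway, order_id: str, amount_cents: int) -> str:
    """Application logic under test; it depends only on the contract."""
    if amount_cents <= 0:
        raise ValueError("amount must be positive")
    response = gateway.charge(order_id, amount_cents)
    return "CONFIRMED" if response["status"] == "approved" else "PAYMENT_FAILED"
```

When the real backend becomes available, the same tests can be pointed at it by passing a real implementation of the contract instead of the stub.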

#Day_09

- The first step should always be reproducing the issue consistently by applying the same steps and inputs.

- Identify the first-level source of the issue. For example, in a web application, find out whether the issue comes from frontend code, backend code, the database, a 3rd party, or the environment.

- If we are sure the issue comes from the frontend, Chrome DevTools can be handy for debugging. We can add breakpoints in any file in the Sources panel and check the console messages. We can also use the Command Menu (Ctrl/Cmd + Shift + P) to run a variety of commands on the frontend for debugging purposes.

- For backend API issues, we need to rely on the request and response data and application-level logs. It's good practice to look at the response status code and data to identify whether the issue is due to auth, bad requests, or server-side errors. Along with this, looking into the actual code-level logs in monitoring applications like Splunk or Datadog can give more insight into the issue. It's also worth looking into session parameters, headers, and cookies for additional information.

- Issues can also be due to hardware/software/browser versions or configurations, so it's important to pay attention to the environment used for reproducing and debugging the issue. Issues can also come from the infra layer, like not receiving a 3rd party response due to missing IP whitelisting.

- Sometimes getting another perspective on the issue also helps. Instead of debugging alone, pairing with another tester or developer can bring more insight into the entire process.

- We may not always be lucky enough to reproduce an intermittent issue by following the exact steps. For web applications, we can use tools to record the steps we perform, so that when an issue occurs we can play back the recording, find the actual steps, and discard the rest. It's also a good idea to look into the application logs for errors/alerts/exceptions around the time we first found the issue. For mobile apps, clearing the log files before testing and pulling a copy after testing completes can help with debugging.
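Two of the triage steps above (bucketing an API failure by status code, and looking for errors around the time an issue was first seen) can be sketched in a few lines of Python. This is a minimal sketch: the bucket names and the log line format (`<ISO-8601 timestamp> <LEVEL> <message>`) are assumptions, not the format of any particular monitoring tool.

```python
from datetime import datetime, timedelta


def classify_status(status_code: int) -> str:
    """Map an HTTP status code to a first-level triage bucket."""
    if status_code in (401, 403):
        return "auth"
    if 400 <= status_code < 500:
        return "client/bad request"
    if 500 <= status_code < 600:
        return "server side"
    if 200 <= status_code < 400:
        return "ok/redirect"
    return "unknown"


def errors_near(log_lines, first_seen: datetime, window_minutes: int = 5):
    """Return ERROR/Exception lines logged within +/- window of the first sighting.

    Assumes (hypothetically) that each line starts with an ISO-8601 timestamp
    followed by a space, e.g. '2024-03-01T10:15:02 ERROR ...'.
    """
    window = timedelta(minutes=window_minutes)
    hits = []
    for line in log_lines:
        stamp, _, message = line.partition(" ")
        try:
            ts = datetime.fromisoformat(stamp)
        except ValueError:
            continue  # skip lines that don't follow the assumed format
        if abs(ts - first_seen) <= window and ("ERROR" in message or "Exception" in message):
            hits.append(line)
    return hits
```

In practice the same filtering is usually done with the query language of Splunk or Datadog; the sketch only shows the idea on exported log lines.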

#Day_10

- The application is hosted in the cloud, and the tester is responsible for deploying and testing it in different environments. Before commencing testing, a tester should know which environment (for example, an EC2 instance in AWS) to connect to and deploy the changes. It can save a lot of time if we know how to access virtual compute services and how to debug and troubleshoot if anything goes wrong. For example, suppose we have deployed the application successfully, but it does not launch properly. The next step is to troubleshoot what went wrong: which part of the application has the issue? Is it the frontend, the backend, or the database? Prior cloud knowledge can help us debug effectively.

- In the cloud, applications can take advantage of related services such as messaging, database, and streaming services. For example, knowing how to connect to a queue in the cloud (such as the SQS service in AWS) and perform basic operations like sending/receiving messages and checking received messages can be very handy while testing the application. Similarly, knowing how to connect to a DB in the cloud, along with basic security settings (inbound/outbound rules, IAM group policies), can help speed up the testing process.

- Setting up test automation infra in the cloud can be another use case. There are many ways to achieve this, and different clouds provide different services for it. For example, we can perform serverless UI test execution using Selenium, AWS Lambda, AWS Fargate, and AWS developer tools. AWS Device Farm can be used for cross-browser or cross-device test execution in the cloud.

- Monitoring is a must for every cloud application, so knowledge of cloud-based monitoring tools like Splunk, New Relic, Datadog, etc. should also be part of a tester's skill set. This is very useful when testing application-level errors/exceptions/alerts. Another use case could be getting actual product usage patterns from those tools to create a risk-based testing strategy.

- We may also observe issues related to the performance and security of the application hosted in the cloud. Knowledge of the cloud infra layer configurations, permissions, security groups, etc. can help troubleshoot those areas.
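As an example of the basic queue operations mentioned above, here is a minimal sketch of an SQS round-trip check. It uses the boto3 SQS client methods `send_message`, `receive_message`, and `delete_message`; the function name `sqs_roundtrip` and the JSON payload are assumptions for illustration. The client is passed in, so the same function can be exercised against a fake in a unit test.

```python
import json


def sqs_roundtrip(sqs, queue_url: str, payload: dict, wait_seconds: int = 5) -> dict:
    """Send a test message, receive it back, delete it, and return its body.

    `sqs` is expected to be a boto3 SQS client, e.g. boto3.client("sqs");
    any object with the same send/receive/delete methods also works.
    """
    sqs.send_message(QueueUrl=queue_url, MessageBody=json.dumps(payload))
    response = sqs.receive_message(
        QueueUrl=queue_url, MaxNumberOfMessages=1, WaitTimeSeconds=wait_seconds
    )
    messages = response.get("Messages", [])
    if not messages:
        raise AssertionError("no message received within the wait time")
    message = messages[0]
    # Delete the message so the check leaves the queue as it found it.
    sqs.delete_message(QueueUrl=queue_url, ReceiptHandle=message["ReceiptHandle"])
    return json.loads(message["Body"])
```

This check is best run against a dedicated test queue: on a shared queue, the message received back may belong to someone else.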

#Day_11

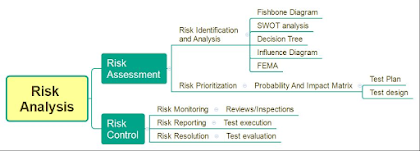

In this post, I would like to summarise my understanding of risk analysis. The motivation for performing risk analysis during product development is to understand what can go wrong with the product well before it is implemented. Defects in production can be very costly. Performing a risk analysis can help identify areas where software flaws may cause serious issues. Identifying risk items and setting priorities can help developers apply remedies proactively, before damage is done. As a result of the risk assessment process, testers can come up with a risk impact matrix that defines what is relevant to include in the testing scope. This can be an important input for test planning and design. Reviewing, executing, and evaluating test results are all part of risk control.

- User privacy

- Security and integrity of user data

- Compliance guidelines

- Performance SLAs

- Exposure of confidential data

- Backup and recovery

- Dependency on other software/hardware
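A risk impact matrix is often built by scoring each risk item for likelihood and impact and ranking items by the product of the two. A minimal sketch using some of the risk items above: the 1 to 5 scale, the scores, and the `in_scope_threshold` cut-off are made-up assumptions for illustration, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class RiskItem:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def prioritise(items, in_scope_threshold: int = 10):
    """Sort risks by score and split them into in-scope and lower-priority lists."""
    ranked = sorted(items, key=lambda r: r.score, reverse=True)
    in_scope = [r for r in ranked if r.score >= in_scope_threshold]
    lower = [r for r in ranked if r.score < in_scope_threshold]
    return in_scope, lower


# Illustrative scores only; real values come from the team's risk workshop.
risks = [
    RiskItem("Exposure of confidential data", likelihood=2, impact=5),
    RiskItem("Performance SLAs", likelihood=3, impact=4),
    RiskItem("Backup and recovery", likelihood=1, impact=5),
    RiskItem("Dependency on other software/hardware", likelihood=4, impact=3),
]

in_scope, lower = prioritise(risks)
for r in in_scope:
    print(f"{r.score:>2}  {r.name}")
```

A plain product of likelihood and impact is only one common convention; some teams weight impact more heavily or use qualitative bands instead.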

#Day_12

Infrastructure Testing

Do we need to test deployment separately? I advocate for at least a basic level of infra testing before accepting any build, for the following reasons:

- Most modern applications come with many moving components like VMs, containers, security components, networking components, monitors, data pipelines, databases, object stores, etc., with additional risk associated with each component.

- We usually have multiple environments like dev, stage, and prod, and deployment applies to every environment we manage.

- A single application can have multiple configurations, for example admin, buyer, and seller apps for an ecommerce application.

- Tested infra code can prevent unexpected issues and detect them faster.

- Testing the infrastructure can save time for all the teams involved throughout the process.

Let’s see some simple examples of different types of testing we can perform on infra code.

Pre-deployment test examples:

- Static analysis for checking syntactic and structural issues (terraform validate, kubectl, etc.)

- Linters to check for common errors (e.g. conftest for Terraform code)

- Dry run by partially executing the deployment code without a real deployment (terraform plan)

Deployment test examples:

- Unit testing: on the infra side, a typical unit test deploys the infra, validates it, and undeploys it at the end. Tools like Terratest can facilitate this entire process.

- Integration testing: like application code, infra code can have dependencies on other infra code/modules. We need to test those as well to catch integration errors early.

- End-to-end tests: here we test the entire infra together. This is the most time-consuming layer of testing. For example, if an EC2 instance has a 1% chance of failure at the unit level, the chance of some failure grows at the integration and end-to-end layers because the number of instances involved increases.
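The compounding effect in the last point can be made concrete. If each of n instances independently has failure probability p, the chance that at least one fails is 1 - (1 - p)^n. Independence is a simplifying assumption; real infra failures are often correlated.

```python
def chance_of_any_failure(per_instance_failure: float, instances: int) -> float:
    """Probability that at least one of `instances` independent components fails.

    P(any failure) = 1 - (1 - p) ** n, assuming failures are independent.
    """
    return 1 - (1 - per_instance_failure) ** instances


for n in (1, 10, 50):
    print(n, round(chance_of_any_failure(0.01, n), 3))
```

With a 1% per-instance failure rate, ten instances give roughly a 9.6% chance that something fails, and fifty give roughly 39.5%.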

Post-deployment smoke test examples:

- All the servers are up and running (ping should work).

- Application websites are reachable.

- All the databases are up and running.

- Messaging infra (message brokers like MQTT, Kafka, etc.) is up and running.

- IP whitelisting/blacklisting works as expected.

- Monitors can connect to the needed servers.
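A minimal sketch of some of these smoke checks using only the Python standard library. A TCP connect is used instead of ICMP ping, because ping needs raw sockets or an external binary; checking that a DB or broker port accepts connections is likewise an assumption about what "up and running" means for your setup. `port_open`, `website_reachable`, and `run_smoke_checks` are hypothetical helper names.

```python
import socket
import urllib.request


def port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """True if a TCP connection to host:port succeeds (servers, DBs, brokers)."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def website_reachable(url: str, timeout: float = 5.0) -> bool:
    """True if the URL answers with a non-error HTTP status."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status < 400
    except OSError:  # URLError and HTTPError are both subclasses of OSError
        return False


def run_smoke_checks(targets):
    """targets: list of (label, check_fn, args); returns {label: passed}."""
    return {label: check(*args) for label, check, args in targets}
```

A post-deployment job could call `run_smoke_checks` with entries such as `("orders DB", port_open, ("<db-host>", 5432))` and fail the pipeline if any value is `False`.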

https://www.linkedin.com/posts/dimpy-adhikary_21days21tips-testinginfra-activity-7049358333031436288-ieyd?utm_source=share&utm_medium=member_desktop

Poor Product Quality

How do you identify symptoms of poor product quality?

Here is my go-to checklist:

- Regression defects keep coming.

- Customers are not happy with the product quality and keep complaining about it.

- Longer feedback loop.

- The team is not ready to take ownership of testing, and the blame game continues.

- Every release is stressful.

- No quality goal/vision defined by leadership.

- Testers and developers work in silos most of the time.

- Stories are marked done without proper testing (functional/automation).

- Testing/automation strategy is missing from the release/project plan.

- The team does not have the skills/knowledge/experience to develop automated test suites.

- Tests are not part of the CI process.

- Defects are not picked up/analysed as soon as they are detected.

- Not following process/best practices.

- Not much knowledge sharing happens internally, and the team is not motivated enough.

- Tech debt keeps increasing.

- No proper planning and unrealistic estimation.

- Using irrelevant metrics to measure progress.

#Day_14

Quality Metrics

- Tracking the progress overall.

- Take corrective actions as needed.

- Take informed decisions.

Dashboards:

- Quality dashboard (Execution status of Functional tests, Non-Functional tests, Code coverage)

- Defect dashboard (RCAs, trends)

- Execution time per test suite and per environment.

- Time saved while executing regression testing due to automated test execution.

- Defects discovered in different levels of testing as per the test pyramid.

- Defects discovered by severity/environments/functional testing/automated execution.

- No. of new tests added/automated.

- Percentage execution completed vs remaining.

- Average age of open defects in the system.

- No. of production defects and their frequency.

- Time spent fixing automated test failures.
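A few of these metrics are simple to compute once defect and execution data is exported. A minimal sketch, assuming defect records are dicts with hypothetical `opened`, `closed`, `severity`, and `environment` fields; adapt the field names to whatever your defect tracker exports.

```python
from datetime import date


def average_open_defect_age(defects, today: date) -> float:
    """Average age in days of defects that are still open."""
    ages = [(today - d["opened"]).days for d in defects if d["closed"] is None]
    return sum(ages) / len(ages) if ages else 0.0


def execution_progress(executed: int, total: int) -> tuple[float, float]:
    """Percentage of planned tests executed vs remaining."""
    if total == 0:
        return 0.0, 0.0
    done = 100 * executed / total
    return round(done, 1), round(100 - done, 1)


def defects_by(defects, key: str) -> dict:
    """Count defects per value of a field, e.g. 'severity' or 'environment'."""
    counts = {}
    for d in defects:
        counts[d[key]] = counts.get(d[key], 0) + 1
    return counts
```

Numbers like these are most useful as trends over time on the dashboards above, rather than as one-off snapshots.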

#Day_15

Are you still looking for another project’s test plan template before starting to create your own? If the answer is yes, it's time to change your approach and mindset and bring some creativity into the process. Two applications may have similar features, but that does not mean they share the same context. While creating a test plan, it’s important to understand the application we are testing and the context of its usage. Rather than following a fixed template, create one that is more relevant, flexible, and useful for the team.

- Information about the project/application under test (domain/technical and relevant context)

- Information about the target users

- Scope of testing

- Not in scope

- Timelines

- Test pyramid

- Test types

- Test execution and release planning

- Automation plan

- Tools/frameworks to be used.

- Risks and mitigation plans

- Processes to be followed (workflows for epics/stories/tasks and defects lifecycle and continuous testing)

- Reporting mechanisms to be used.

- Metrics to be measured.

- Discuss and brainstorm with the team.

- Come up with a common understanding of how to perform testing throughout.

- Create it using any format/tool the team is comfortable with.

- Review and incorporate feedback.

- Make it a living document and keep updating it as development progresses.

#Day_16

How can we stay relevant in today’s world when technology changes so rapidly? Sharing what I follow to keep myself updated:

- Create a plan and be consistent. Accept the fact that we cannot learn everything, but being consistent will help us learn what makes sense to us, in a way that works for us.

- Make sure your plan includes a variety of topics, not just technical skills. Adding soft skills and hobbies as part of your learning plan can be really motivating.

- Do not compare yourself with others, but do keep a check on your own progress. This will help keep the ball rolling in the right direction.

- Keep a reading list/separate bookmark folder of things you would like to explore. Keeping the list in a paper notebook can be a creative way of reminding yourself of your goals daily.

- Earn a certificate or enrol in a training course to achieve short/long-term goals, and be ready to invest both time and money in your personal aspirations. There are many free resources on the internet, but experience from a skilled mentor can make a real difference and speed up the learning process. Do enough research before selecting any course or training program. The certificate/trainer/YouTube video/blog that works for your friend may not work for you, or vice versa. A trainer can provide the guidance you need, but only you can put in the effort to reach your goal. Instead of getting into arguments about which certificate is best, just select the one you think is best for you based on your research.

- Align learning aspirations with on-the-job responsibilities. Look for opportunities and volunteer for them. For example: how can you apply a skill you learnt at a recent meetup to solve a day-to-day problem at work? If you are upskilling in a new technology and your team is discussing adopting it as a long-term goal, pitch in proactively and provide your input.

- Work on a pet project to apply your learnings and take them to the next level. Share your knowledge with others and give presentations; you will learn more throughout the process.

- Learning is a journey, choose your path and destination wisely.

#Day_17

How are you managing test data in your project? This process can be quite complex depending on the product landscape. Organisations can discover and correct defects earlier in the development process when they have a better test data management strategy. I would like to share a few pointers to consider while creating a test data management strategy:

- Start by discovering the data needs. While testing, we may need different varieties of data at different stages. For example, data needed for requirement validation may differ from the data needed for actual test execution. Unit/integration layer testing may need different test data than end-to-end tests. Test data may also differ by environment (staging, UAT, and prod). Cross-functional testing like performance, load, and security testing may need a different data strategy altogether.

- While preparing data, we can follow multiple approaches, like cloning data from prod, subsetting data from multiple data sources, creating data from scratch, or generating data on the fly when needed. I prefer a hybrid approach, such as subsetting a portion of prod data so that data quality and referential integrity remain intact, while keeping the flexibility to customise the cloned data based on different business rules and testing needs.

- We need to make sure the data we copied or created does not contain any confidential information of real users. Data should be realistic, with masking enabled for the needed fields.

- We should have a mechanism to classify the generated data into different feeds that different teams can use based on their testing needs. For example, if we need test data for creating one complete order, the order data feed should provide it easily, for use in both functional and automated testing.

- Refresh and sync is another important aspect of a test data management strategy. We should be able to roll back to the previous state of the test data after testing completes, and to refresh data component-wise for a specific use case without refreshing the entire test data set.

- Validating the data in an automated way after generation can help make sure we are using the right kind of data for testing.
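Masking and automated validation can be sketched together. This minimal sketch uses salted SHA-256 hashing so that the same real value always maps to the same token, which keeps joins between tables (referential integrity) working after masking. The field names, the `test-env-salt` value, and the validation rules are hypothetical; note that salted hashing is pseudonymisation, so the salt must be kept secret.

```python
import hashlib
import re

EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def mask_value(value: str, salt: str = "test-env-salt") -> str:
    """Deterministically pseudonymise a value: same input, same token."""
    digest = hashlib.sha256((salt + value).encode("utf-8")).hexdigest()[:12]
    return f"masked_{digest}"


def mask_record(record: dict, sensitive_fields=("name", "email", "phone")) -> dict:
    """Return a copy of the record with the sensitive fields masked."""
    masked = dict(record)
    for field in sensitive_fields:
        if masked.get(field) is not None:
            if field == "email":
                # Keep a realistic shape so email validation in the app still passes.
                masked[field] = mask_value(masked[field]) + "@example.com"
            else:
                masked[field] = mask_value(masked[field])
    return masked


def validate_record(record: dict) -> list:
    """Return a list of problems found in a generated/masked record."""
    problems = []
    if not record.get("customer_id"):
        problems.append("missing customer_id")
    if "email" in record and not EMAIL_RE.match(record["email"]):
        problems.append("email has an invalid format")
    return problems
```

Running `validate_record` over every record in a freshly generated feed is one way to catch broken data before it reaches the tests.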

#Day_18

Are you looking for new career opportunities this year? It is important to prepare well so you don't miss the right opportunity at the right time. Hiring trends and the economy vary a great deal, but that does not mean we can land something we really want and aspire to without preparation.

The following are some important tips from my experience to keep in mind when you are looking for a job:

- Be clear about what you expect from your next role. Unless you know what you want, you may end up going around in circles without getting anywhere. Be open and honest with your hiring manager/HR about this so that everyone is on the same page.

- Take the time to create a plan and evaluate your own strengths and weaknesses. This will help you focus on the areas that need attention.

- Spend some time analysing job descriptions posted for similar openings you are interested in. This will help you steer your preparation in the right direction based on the expectations for that position.

- Make your resume stand out by preparing it well, and tailor it to each job description.

- Prepare your work portfolio: personal GitHub account, websites, pet projects, links to public talks, etc. This can give the recruiter a good overview of your background.

- Attend some mock interviews before the actual ones; this will boost your confidence.

- Apply via referrals, and connect with the hiring manager directly via social media. The chance of getting an interview call is generally higher via a referral.

- Finally, just be yourself in any interview. Ask counter-questions if you are not able to understand what the interviewer is expecting.

- Don’t get frustrated by rejection. Every interview is a learning process; be positive and move on irrespective of the end result.

#Day_19

Do you find it frustrating to analyse and fix flaky automated tests? There is no easy way to deal with flaky tests, especially when there are a large number of them.

Flaky tests can cause the following pain points:

- Reduce confidence in the overall test results.

- Add maintenance effort for debugging and fixing the tests.

- Slow down the execution and feedback loop.

Common causes of flaky tests:

- Not handling the state needed by each test properly. Without proper setup and teardown, a state change made by one test can impact the next test to be executed.

- Changes in application business logic (addition/deletion of flows/steps).

- Changes in the application elements the tests rely on, for example UI locators.

- Unresponsiveness of the application (hangs/crashes).

- Dependencies on 3rd parties (contract changes/response delays).

- Network issues, resource utilisation issues, etc.

Ways to reduce flakiness:

- Add the necessary setup/teardown steps to each test so that each test can start smoothly.

- Add proper abstraction to separate tests from business logic, so that changes in business logic can be absorbed with minimal maintenance.

- Handle sync issues with care based on analysis, and adapt as necessary.

- Use the test pyramid to keep a balance between the number of tests in different layers, making sure tests are not repeated in each layer.

- Have a mechanism to classify test failures into suitable buckets, like application failures and other reasons. This helps us take the necessary actions faster.

- Test 3rd party dependencies using mocks wherever possible.

- Automatically move flaky tests from the main pipeline to an alternate one after a few inconsistent results. Move them back to the main pipeline once they are fixed and stable.
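Two of these remedies can be sketched in Python: polling for a condition instead of sleeping for a fixed time (one way of handling sync issues), and automatically quarantining tests whose results flip too often. The window size and flip threshold below are arbitrary assumptions, and `wait_until` and `FlakyTracker` are hypothetical names, not part of any test framework.

```python
import time
from collections import deque


def wait_until(condition, timeout: float = 10.0, interval: float = 0.5) -> bool:
    """Poll `condition` until it returns True or the timeout expires.

    Preferable to a fixed sleep: it waits only as long as needed.
    """
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        if condition():
            return True
        time.sleep(interval)
    return condition()


class FlakyTracker:
    """Quarantine a test when its recent results flip between pass and fail too often."""

    def __init__(self, window: int = 5, max_flips: int = 2):
        self.window = window        # how many recent runs to remember per test
        self.max_flips = max_flips  # pass/fail changes that mark a test as flaky
        self.history = {}

    def record(self, test_name: str, passed: bool) -> None:
        self.history.setdefault(test_name, deque(maxlen=self.window)).append(passed)

    def is_flaky(self, test_name: str) -> bool:
        runs = list(self.history.get(test_name, []))
        flips = sum(1 for a, b in zip(runs, runs[1:]) if a != b)
        return flips >= self.max_flips

    def pipelines(self, tests):
        """Split tests into the main pipeline and a quarantine pipeline."""
        main = [t for t in tests if not self.is_flaky(t)]
        quarantine = [t for t in tests if self.is_flaky(t)]
        return main, quarantine
```

Because only the last `window` runs are kept, a fixed test that passes consistently drops its flip count to zero and moves back to the main pipeline on its own.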

Quality Engineering Principles

Driven by continuous process improvement and customer satisfaction, quality has become a critical component of long-term success for any business. Sharing a few core quality engineering principles that can help ensure delivery of a high-quality product:

- Quality is the whole team’s responsibility. Testers can advocate for quality, but they do not own it.

- Set up a quality mindset and bring the right kind of focus to quality. Everyone in the team should understand the quality goal and work together to achieve it.

- Automation thinking is critical: not just automating test cases, but bringing in the right automation strategy, one that provides faster feedback, reduces test execution time, removes redundancy, improves productivity, and creates a smooth path to production. Investing in the right tools and frameworks is crucial and can bring noticeable change to the overall engineering process. Example: using artificial intelligence and machine learning to collect, analyse, and predict failure points as a feedback mechanism for the entire engineering process.

- Effective collaboration, no more working in silos. Shifting left in the real sense: testers getting involved in every phase of development, not just testing.

- Collect and measure the right metrics to get a holistic view of quality, not just a surface-level one. Process, product, and people (3P) are the three key elements of a quality system, and all of them need attention for overall improvement.

- Use RCAs as a guiding factor for decision-making.

Testing Logs

- checking in the monitoring application (Datadog/Splunk) that the alert has been triggered

- checking the business logic based on which the alert has been triggered

- checking the alert severity as per the defined rules

- checking the alert frequency

- checking that the alert is delivered to configured channels like email/SMS, etc.

- checking the relevancy of the log content.

- relevancy and completeness of the content added to the log.

- presence of any confidential information, like PCI data, etc.

- removal of logs added for local debugging purpose.
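The last two log checks (confidential data and leftover debugging output) can be partly automated. A minimal sketch: card-number candidates are found with a regex and confirmed with the Luhn checksum that payment card numbers use, and debug leftovers are found by matching markers. The marker strings are hypothetical; a real audit would use the team's own conventions and a proper PCI scanning tool.

```python
import re

# Candidate card numbers: 13-19 digits, optionally separated by spaces or dashes.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")
# Hypothetical markers developers might leave behind while debugging locally.
DEBUG_MARKERS = ("DEBUG-LOCAL", "TODO remove", "print(")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum used by payment card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        n = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            n *= 2
            if n > 9:
                n -= 9
        total += n
    return total % 10 == 0


def audit_log_lines(lines):
    """Return (line_number, issue) pairs for suspicious log content."""
    findings = []
    for number, line in enumerate(lines, start=1):
        for match in CARD_CANDIDATE.finditer(line):
            digits = re.sub(r"[ -]", "", match.group())
            if luhn_valid(digits):
                findings.append((number, "possible card number (PCI data)"))
        if any(marker in line for marker in DEBUG_MARKERS):
            findings.append((number, "leftover local debugging output"))
    return findings
```

The Luhn check cuts down false positives from long numeric IDs, but it will not catch every form of confidential data, so manual review of log content is still needed.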